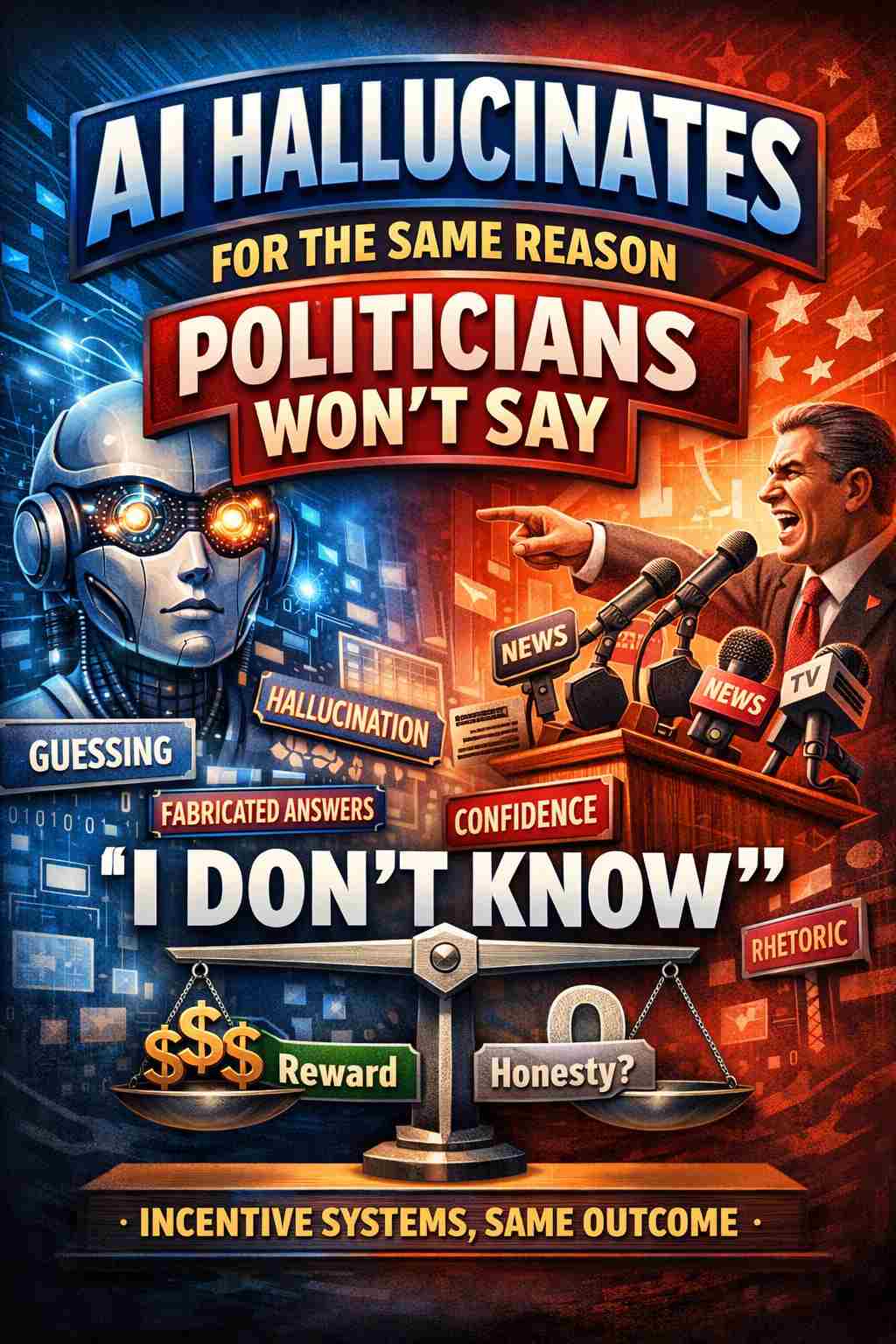

Parallel Proof: AI Hallucinations and the Political “Grift of Gab”. Hidden Rule: Systems That Reward Confidence Produce Endless Nonsense.

Introduction

Recent research into large language models has revealed a structural reason why artificial intelligence systems sometimes produce confident but incorrect statements. In simple terms, many AI systems are trained in environments that reward producing an answer more than admitting uncertainty. When guessing has a higher payoff than saying “I don’t know,” the system naturally learns to keep talking.

That mechanism explains why AI hallucinations occur.

But when the same logic is applied to human institutions, it exposes something deeply familiar. Modern political communication operates under an almost identical incentive structure. Politicians are rewarded for constant, confident speech, even when they lack the knowledge to justify it.

The result is a class of public figures whose primary survival skill is not accuracy or expertise, but the ability to generate plausible-sounding statements on demand.

In other words, the famous political “gift of gab” may function much like an AI hallucination engine.

The Incentive System Behind AI Hallucinations

Large language models are typically evaluated using scoring systems that reward useful answers. Simplified, the scoring system often looks like this:

- Correct answer: positive reward

- Incorrect answer: negative reward

- No answer (“I don’t know”): little or no reward

Under those conditions, attempting an answer, even an uncertain one, can produce a higher expected reward than declining to respond, as long as the penalty for a wrong answer is small relative to the reward for a correct one.
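The comparison between guessing and abstaining can be made concrete with a little arithmetic. The sketch below is illustrative only: the function names and the reward values are assumptions chosen for the example, not figures from any real benchmark. It computes the expected reward of attempting an answer and the confidence level at which guessing starts to beat saying “I don’t know.”

```python
def expected_guess_reward(p_correct, r_correct, r_incorrect):
    """Expected reward of attempting an answer that is right with probability p_correct."""
    return p_correct * r_correct + (1.0 - p_correct) * r_incorrect


def break_even_confidence(r_correct, r_incorrect, r_abstain):
    """Confidence above which guessing has a higher expected reward than abstaining."""
    return (r_abstain - r_incorrect) / (r_correct - r_incorrect)


# Hypothetical scoring schemes: (reward if correct, reward if incorrect, reward if abstaining).
schemes = {
    "no penalty for wrong answers": (1.0, 0.0, 0.0),
    "mild penalty": (1.0, -0.25, 0.0),
    "harsh penalty": (1.0, -1.0, 0.0),
}

for name, (r_correct, r_incorrect, r_abstain) in schemes.items():
    threshold = break_even_confidence(r_correct, r_incorrect, r_abstain)
    guess = expected_guess_reward(0.3, r_correct, r_incorrect)  # a model that is only 30% sure
    print(f"{name}: guess above p={threshold:.2f}; "
          f"at p=0.30 guessing earns {guess:+.2f} vs {r_abstain:+.2f} for abstaining")
```

When wrong answers cost nothing, the break-even confidence is zero, so any chance of being right makes guessing the better strategy. Only a substantial penalty for errors makes abstaining worthwhile at low confidence.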

Over time the model learns the obvious strategy:

Always generate an answer.

Researchers note that hallucinations become unavoidable when three conditions appear:

- knowledge gaps in the training data

- reasoning limits in the model architecture

- questions that cannot be definitively verified

When information is missing, the model fills the gap with statistically plausible text.

The system is not designed to remain silent.

It is designed to keep producing language.

The Political Communication Machine

Now examine the environment in which modern politicians operate.

Political leaders are expected to comment instantly on an enormous range of subjects:

- global economics

- emerging technologies

- military operations

- climate science

- public health

- regulatory policy

No individual can realistically possess deep knowledge across all these areas. Yet the political system demands immediate responses to all of them.

The evaluation system facing politicians often works like this:

| Response | Political Outcome |
|---|---|
| Confident answer | Appears strong and decisive |
| Incorrect answer | Usually ignored or rewritten later |
| “I don’t know” | Treated as weakness or incompetence |

In other words, uncertainty carries the largest penalty.

So the rational political strategy becomes exactly the same as the AI strategy.

Keep talking.

Filling Knowledge Gaps with Rhetoric

When politicians encounter subjects outside their expertise, the response is rarely to pause or admit uncertainty. Instead, the gap is filled with rhetoric.

Typical tools include:

- ideological framing

- talking points from party staff

- narrative simplifications

- vague promises about future action

These rhetorical devices serve the same role that probabilistic text generation serves in AI systems. They create statements that sound plausible, authoritative, and coherent even when the underlying certainty is thin.

Accuracy becomes secondary to maintaining the appearance of control.

Structural Limits of Human Decision-Making

Human leaders face genuine constraints: limited time, incomplete intelligence, conflicting expert advice, and massive bureaucratic complexity. But political communication rarely acknowledges these realities.

Instead, leaders are pushed to compress uncertainty into confident statements suitable for media clips and campaign messaging.

The public rarely hears:

- “we do not yet have enough evidence”

- “this system is too complex to predict precisely”

- “the outcome cannot be known in advance”

Those statements are politically dangerous because they signal uncertainty.

And uncertainty is punished in political theater.

The Real Meaning of the “Gift of Gab”

The so-called “gift of gab” is often treated as a charming personality trait. In reality it functions as a survival adaptation inside a flawed communication ecosystem.

Political success increasingly favors individuals who can:

- answer instantly

- speak fluently on unfamiliar subjects

- maintain confidence regardless of uncertainty

- deliver narratives that sound authoritative

The ability to generate persuasive speech without deep subject knowledge becomes a professional advantage.

In other words, the system selects for individuals who can produce high-volume confident language under conditions of limited information.

This is precisely the behavior AI hallucination research describes.

Two Systems, One Logic

Seen side by side, the parallel is difficult to ignore.

| System | Incentive Structure | Result |
|---|---|---|
| AI training benchmarks | Guessing rewarded more than abstaining | Hallucinated answers |
| Political media environment | Confidence rewarded more than uncertainty | Confident political speculation |

Neither system is fundamentally optimized for truth.

Both are optimized for performance within their scoring environment.

The Structural Problem

The real issue is not simply that politicians sometimes speak inaccurately. The deeper problem is that the communication system surrounding them rewards confident speech regardless of reliability.

Media cycles demand instant commentary. Debates reward rapid responses. Campaigns reward bold promises.

Within that environment, admitting uncertainty becomes a liability.

So politicians behave exactly the way any rational system would behave under those incentives.

They keep talking.

Conclusion

AI hallucinations reveal an uncomfortable truth about communication systems: when agents are rewarded for producing answers rather than acknowledging uncertainty, confident speculation becomes the dominant strategy.

Modern political communication appears to operate under the same rule.

Artificial intelligence models hallucinate because they are trained to avoid silence.

Politicians talk endlessly for the same reason.

The system does not reward “I don’t know.”

So both machines and politicians learn the same lesson:

Never stop answering.

Even when the answer is wrong.

The argument above draws on a paper OpenAI published on why language models hallucinate:

https://openai.com/index/why-language-models-hallucinate

The core argument in the paper is that hallucinations arise from the training and evaluation process itself. The authors write that language models sometimes guess when uncertain because training and benchmark scoring reward producing an answer rather than acknowledging uncertainty.

They compare it to a student on a test:

- Guessing sometimes earns points

- Leaving the answer blank never does

So models are incentivized to produce plausible answers even when unsure.
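The test-taking analogy can be played out as a small simulation. This is a minimal sketch under stated assumptions: the `benchmark_score` function, the share of questions the test-taker actually knows (`p_known`), and the number of answer choices are all hypothetical, and grading is assumed to be binary (one point for a correct answer, zero for a wrong or blank one).

```python
import random


def benchmark_score(policy, n_questions=1000, p_known=0.6, n_choices=4, seed=0):
    """Average score under binary grading for a 'guess' or 'abstain' policy.

    All parameters are illustrative assumptions, not values from a real benchmark.
    """
    rng = random.Random(seed)
    points = 0
    for _ in range(n_questions):
        # Draw both values for every question so the two policies face identical questions.
        knows_answer = rng.random() < p_known
        lucky_guess = rng.randrange(n_choices) == 0
        if knows_answer:
            points += 1  # a known answer always scores
        elif policy == "guess" and lucky_guess:
            points += 1  # a blind guess sometimes scores
        # Under "abstain", an unknown question is left blank and scores zero.
    return points / n_questions


print("always guess:       ", benchmark_score("guess"))
print("abstain when unsure:", benchmark_score("abstain"))
```

Because a blank answer never scores and a guess sometimes does, the guessing policy can only match or exceed the abstaining policy on this kind of scoreboard, even though it produces many more wrong answers along the way.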