“AI can hold a 200k token context window (150k words) in a form of attention that allows constant cross-referencing across that entire input length… This isn’t intelligence in the human sense, it’s something different: Comprehensive pattern matching across a very large context window, with the ability to apply consistent rules without fatigue or forgetfulness — now look at what that means for the entropy (“rot”) problem…” 11:58 https://www.youtube.com/watch?v=NoRePxSrhpw

“Our goal is simply to put AI in the parts of the [pipeline] where humans were going to lose to entropy anyway.” 21:19 https://www.youtube.com/watch?v=NoRePxSrhpw

Transcript

0:00

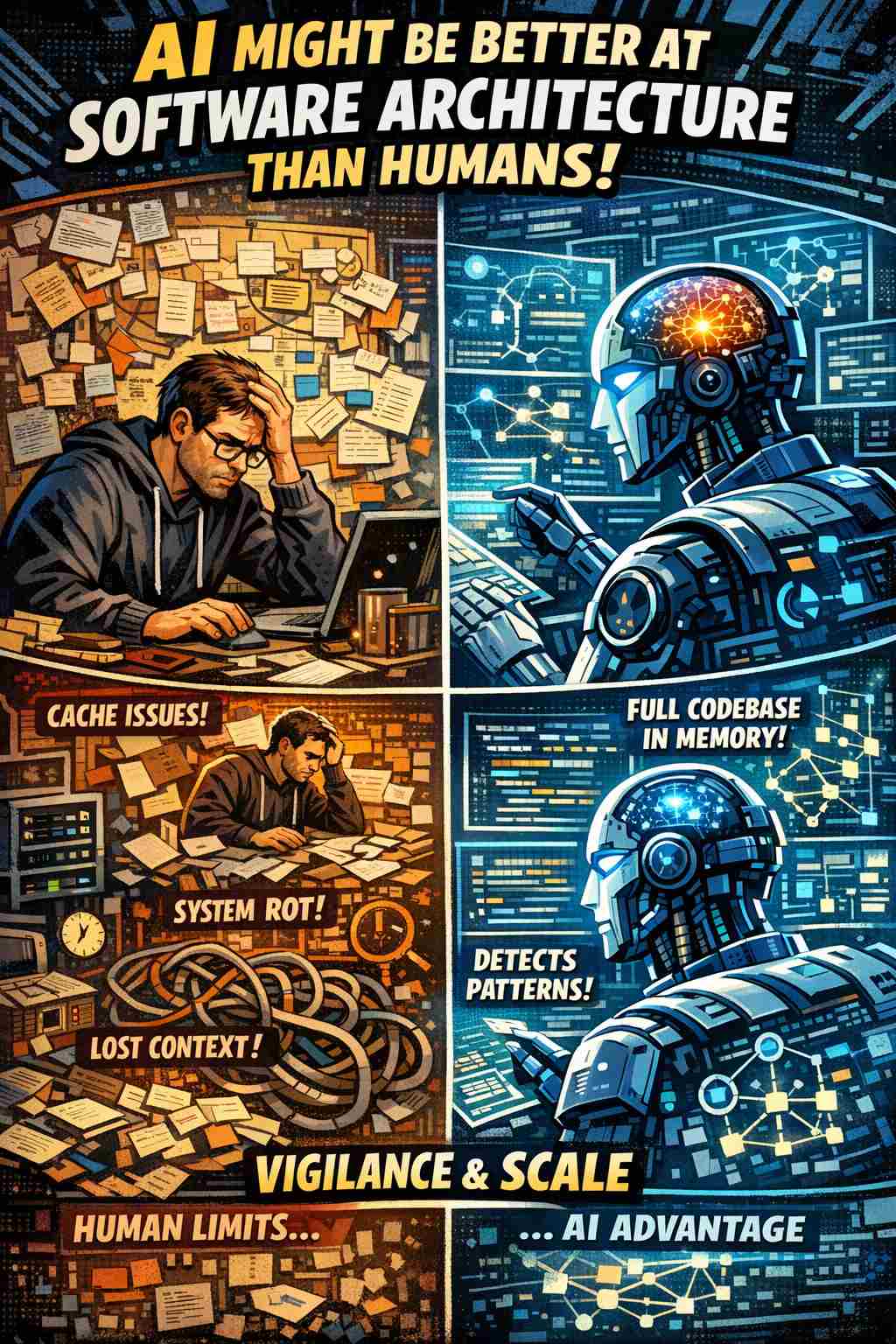

AI might be better at software

0:01

architecture than humans. Not because AI

0:04

is smarter, but because humans are

0:06

structurally incapable of the kind of

0:08

vigilance that good scaled technical

0:11

architecture requires. That's a very

0:13

strong claim and it cuts against

0:14

everything we've been told typically. So

0:16

the conventional wisdom for a couple of

0:18

years now has been AI is bad at

0:21

technical architecture because

0:22

architecture requires holistic thinking,

0:24

creative judgment, wisdom accumulated

0:27

over the years. And architecture is

0:29

supposedly one of the last bastions of

0:32

human engineering, the domain where

0:34

experience and intuition matter the

0:36

most. But here's what I keep noticing.

0:38

When engineers describe their

0:41

architectural failures, the performance

0:43

that degraded over months or caching

0:45

layers that broke quietly or technical

0:47

debt that kept accumulating despite

0:49

everybody's best intentions, the root

0:51

cause is almost never bad architectural

0:54

judgment. It's almost always lost

0:57

context. The information needed to

0:59

prevent the problem did exist. It was

1:02

just spread across too many files, too

1:04

many people, too many moments in time.

1:06

No single human mind could hold it all

1:09

at once. The original architectures are

1:11

often fine. The engineers are competent.

1:13

The code reviews are typically thorough.

1:15

But somewhere between the initial design

1:16

and the daily reality of shipping

1:18

features to production, systems rot. And

1:21

every individual change can make sense

1:22

and everything can pass review. And yet

1:24

together we get into a position where we

1:26

create messes that no single person saw

1:29

coming. It's sort of a tragedy of the

1:31

commons written in architectural

1:33

failure. It's not a dramatic collapse.

1:35

It feels more like a slow rot, and it

1:37

doesn't mean that people are bad

1:39

engineers. Good engineers operating

1:41

under human cognitive constraints can

1:43

still get into this situation. So I

1:45

wanted to ask a provocative question.

1:47

What if we've been thinking about all of

1:49

this backwards? What if there are

1:51

specific dimensions of architectural

1:54

work where AI isn't just adequate but

1:56

structurally superior to humans? Not

1:58

because of intelligence, but because of

2:02

attention span, memory, and the ability

2:04

to hold an entire codebase sort of in

2:07

mind while evaluating a single line

2:09

change. And increasingly, as you get

2:10

larger and larger context windows and

2:12

searchable context, that becomes a more

2:14

viable mental model to imagine for our

2:16

AI agents. This is not a polemic about

2:20

AI replacing architects. Architects

2:22

still have a key role, as you'll see. It's

2:24

actually an attempt to reason backwards

2:27

from key principles that underlie

2:29

architecture and understand where

2:31

cognitive advantages actually lie for

2:33

humans and AI in the space and think

2:36

about what that means for how we build

2:38

software together as AI partners with us

2:41

in 2026 and beyond. So step in with me.

2:44

Let me start with a piece that's been

2:46

circulating in engineering circles

2:48

recently. Ding, who spent roughly 7

2:50

years at Vercel doing performance

2:52

optimization work, has opened roughly

2:54

400 pull requests focused on

2:56

performance. And we know this because he

2:58

wrote about it. And about one in every

3:00

10 of the ones he's submitted is

3:02

crystallizing a problem for him that

3:04

I've seen across every large engineering

3:06

organization I've worked with. And his

3:08

thesis is that performance problems

3:10

aren't technical problems. They're

3:12

actually entropy problems. And I think

3:13

that's a profound insight. The argument

3:15

goes like this. Every engineer, no

3:17

matter how experienced, can only hold so

3:20

much in their head. Modern code bases

3:22

grow exponentially. Dependencies, state

3:24

machines, async flows, caching layers.

3:27

So the codebase grows faster than a

3:29

given individual can track. This is even

3:31

more true in the age of AI. So engineers

3:34

shift their focus between features.

3:36

Context will fade. It'll fade even

3:37

faster in the age of AI. And as the team

3:40

scales, knowledge becomes distributed

3:42

and diluted. And so his

3:44

framing just sticks in your head. So he

3:46

wrote, "You cannot hold the design of

3:48

the cathedral in your head while laying

3:50

a single brick." I think that's really

3:52

true. And it's going to be more true if

3:54

we imagine a world where it's AI agents

3:56

everywhere laying those bricks for the

3:58

cathedral. And here's where it gets

3:59

interesting. The same mistakes keep

4:02

appearing across different organizations

4:03

and code bases. We have faster

4:05

frameworks now. We have better

4:07

compilers. We have smarter linters. We

4:08

have AI agents. But entropy is not a

4:11

technical problem that you can patch.

4:13

It's a systemic problem that emerges

4:15

from the mismatch between human

4:17

cognitive architectures and the scale of

4:20

modern software systems. And we tell

4:22

ourselves if engineers can pay

4:23

attention, if engineers can write better

4:25

code, the application will just work.

4:28

Good intentions do not scale. It's not

4:31

because engineers are careless. It's

4:32

because the system allows degradation.

4:35

So entropy wins not through malice and

4:37

not through incompetence, but through

4:38

the accumulation of local reasonable

4:41

decisions that nobody saw adding up to

4:43

systemic problems. Let's make this

4:46

tangible with examples from production

4:48

code bases. Example one, abstraction

4:51

conceals cost. So a reusable pop-up hook

4:54

that looks perfectly clean and adds a

4:56

global click listener to detect when

4:58

users click on a popup on your website.

5:00

It's a reasonable implementation, but

5:03

the abstraction hides something

5:05

critical. Every single instance adds a

5:08

global listener. So if you have 100

5:10

popup instances across your application,

5:13

and you do on complicated websites,

5:15

that's 100 callbacks firing on every

5:17

single click anywhere in the website.

5:20

The technical fix is easy. You just

5:22

deduplicate the listeners. But the real

5:25

problem is systemic. Nothing in the

5:27

codebase prevents this pattern from

5:30

spreading. Next time, the engineer

5:32

reusing the hook has no way to know the

5:34

cost until users complain about sluggish

5:37

performance in production. The

5:38

information needed to make a better

5:40

decision does exist. It's just invisible

5:42

at the point where decisions are made.
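To make example one concrete, here is a minimal sketch of the listener count, not the code from the talk. `FakeDocument`, `mountNaivePopups`, and `SharedClickHub` are hypothetical names, and the fake document stands in for the browser's global click target so the sketch runs without a DOM.

```typescript
// DOM-free stand-in for `document`, so the sketch runs without a browser.
type Listener = () => void;

class FakeDocument {
  private listeners: Listener[] = [];

  addClickListener(listener: Listener): void {
    this.listeners.push(listener);
  }

  // Simulates one click and returns how many global callbacks fired.
  click(): number {
    this.listeners.forEach((listener) => listener());
    return this.listeners.length;
  }
}

// Pattern from the talk: every popup instance registers its own global listener.
function mountNaivePopups(doc: FakeDocument, count: number): void {
  for (let i = 0; i < count; i++) {
    doc.addClickListener(() => {
      /* a per-instance "was this click for me?" check would go here */
    });
  }
}

// Deduplicated variant (hypothetical): one global listener shared by all instances.
class SharedClickHub {
  private handlers: Listener[] = [];
  private attached = false;
  private doc: FakeDocument;

  constructor(doc: FakeDocument) {
    this.doc = doc;
  }

  subscribe(handler: Listener): void {
    this.handlers.push(handler);
    if (!this.attached) {
      // Register with the document only once, no matter how many popups mount.
      this.doc.addClickListener(() => this.handlers.forEach((h) => h()));
      this.attached = true;
    }
  }
}

const naiveDoc = new FakeDocument();
mountNaivePopups(naiveDoc, 100);
const naiveCallbacks = naiveDoc.click(); // 100 global callbacks per click

const sharedDoc = new FakeDocument();
const hub = new SharedClickHub(sharedDoc);
for (let i = 0; i < 100; i++) hub.subscribe(() => {});
const sharedCallbacks = sharedDoc.click(); // 1 global callback per click
```

The shared listener still fans out to its subscribers, so the saving in this sketch is in global registrations. It also gives the codebase one place to filter clicks before any popup code runs.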

5:44

Example number two, fragile

5:45

abstractions. Let's say an engineer

5:47

extends a cached function by adding an

5:50

object parameter. Reasonable change, you

5:52

add a parameter, you can extend the

5:53

functionality. The code compiles and the

5:56

test passes and everything looks good.

5:58

But every call ends up creating a new

6:01

object reference which means the cache

6:04

never hits. It's completely broken

6:06

silently. The technical knowledge to do

6:08

this correctly exists in the

6:10

documentation. The systemic problem is

6:12

that nothing enforces that

6:14

documentation. Type safety doesn't help.
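A small sketch of the silent cache miss in example two. It assumes a cache that keys on argument identity, which is how a JavaScript `Map` compares object keys; the talk does not say which caching API was involved. `cacheByIdentity` and `loadUser` are hypothetical names.

```typescript
// Hypothetical single-argument cache keyed by identity, as a Map compares keys.
function cacheByIdentity<A, R>(fn: (arg: A) => R): (arg: A) => R {
  const store = new Map<A, R>();
  return (arg) => {
    if (store.has(arg)) return store.get(arg) as R;
    const result = fn(arg);
    store.set(arg, result);
    return result;
  };
}

let lookups = 0;
const loadUser = (id: string): string => {
  lookups++; // counts how often the "expensive" work really runs
  return `user:${id}`;
};

// Before the change: a primitive argument, so the second call is a cache hit.
const byId = cacheByIdentity((id: string) => loadUser(id));
byId("42");
byId("42");
const lookupsWithPrimitive = lookups; // 1

// After the change: each call site builds a fresh object, so every call misses.
lookups = 0;
const byOptions = cacheByIdentity((opts: { id: string }) => loadUser(opts.id));
byOptions({ id: "42" });
byOptions({ id: "42" });
const lookupsWithFreshObjects = lookups; // 2
```

Both versions type-check and return the same values, which is why neither a compiler nor a typical test suite flags the regression. Only the lookup counter shows it.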

6:17

It won't get caught by a linter. The

6:19

cache just quietly stops working and

6:22

nobody notices until someone profiles

6:24

the app months later. Example three.

6:27

Let's say an abstraction grows opaque or

6:30

hard to see through. Say a coupon check

6:32

gets added to a function that processes

6:34

orders. The engineer is solving a local

6:36

problem. I have to add coupon support.

6:38

My product manager told me to. So they

6:40

add an await for the coupon validation.

6:42

It seems reasonable, but the function is

6:44

a thousand lines long. It's built by

6:46

multiple people. The coupon check now

6:48

blocks everything below it, creating a

6:50

waterfall where sequential operations

6:52

could have run in parallel. The engineer

6:54

adding the check isn't thinking about

6:56

global asynchronous flows in checkout.

7:00

They can't see that flow because

7:02

it's spread across hundreds or thousands

7:04

of lines of code written by people who

7:06

no longer work there. The optimization

7:08

is technically possible. But the

7:11

information needed to see the

7:12

opportunity exists only if you can hold

7:15

the entire function of checkout in your

7:18

head while understanding the performance

7:21

implications. And because of the way

7:23

human organizations work and the way

7:25

code is built and distributed, and this

7:27

is even more the case in the age of AI,

7:29

nobody can hold all of that in their head.
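The waterfall in example three can be sketched in a few lines. The step names (`coupon`, `inventory`, `shipping`) and the 30 ms delays are assumptions for illustration, and the sketch assumes the three steps are independent of each other, which the real checkout function may or may not allow.

```typescript
// Records the order in which steps are started (not finished).
type StartLog = string[];

// Hypothetical checkout step: logs its start, then resolves after `ms`.
function fakeStep(name: string, ms: number, log: StartLog): Promise<string> {
  log.push(name);
  return new Promise<string>((resolve) => setTimeout(() => resolve(name), ms));
}

// Waterfall: each await blocks everything below it, even independent work.
async function checkoutSequential(log: StartLog): Promise<string[]> {
  const coupon = await fakeStep("coupon", 30, log);
  const inventory = await fakeStep("inventory", 30, log);
  const shipping = await fakeStep("shipping", 30, log);
  return [coupon, inventory, shipping];
}

// Parallel: all independent steps are started before anything is awaited.
async function checkoutParallel(log: StartLog): Promise<string[]> {
  return Promise.all([
    fakeStep("coupon", 30, log),
    fakeStep("inventory", 30, log),
    fakeStep("shipping", 30, log),
  ]);
}

const sequentialLog: StartLog = [];
const parallelLog: StartLog = [];
void checkoutSequential(sequentialLog);
void checkoutParallel(parallelLog);

// Snapshot taken immediately, before any timer has fired.
const startedSequential = sequentialLog.length; // 1: only the coupon check is in flight
const startedParallel = parallelLog.length; // 3: all steps are in flight together
```

With these made-up delays the sequential version waits roughly the sum of the steps (about 90 ms) and the parallel version roughly the longest one (about 30 ms). Spotting that the steps are independent is the part that requires seeing the whole function.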

7:31

Example four, optimization without

7:32

proof. An engineer memoizes a property

7:36

access wrapping condition. They've

7:38

learned that memoization optimizes

7:41

expensive work, but reading a particular

7:44

property is instant. And so they create

7:46

a memoization closure. Tracking

7:49

dependencies and comparing on every

7:52

render is actually more expensive than

7:54

just reading them. Example four,

7:56

optimization without proof. An engineer

7:59

applies a performance optimization to a

8:01

piece of code. They've learned that this

8:02

technique, it's called memoization,

8:04

speeds things up by remembering results

8:06

instead of recalculating them. That's a

8:09

great instinct, but the operation

8:11

they're optimizing was already instant.
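A rough sketch of why this backfires, counting work instead of timing it. `createMemo` is a hypothetical, simplified memo helper and not any framework's API; it only imitates the general shape of "recompute when a dependency changes".

```typescript
interface Row {
  label: string;
}

let depComparisons = 0;

// Hypothetical memo helper: reuses the last value unless a dependency changed.
function createMemo<T>(): (compute: () => T, deps: unknown[]) => T {
  let previous: { deps: unknown[]; value: T } | undefined;
  return (compute, deps) => {
    const prev = previous;
    if (prev !== undefined && prev.deps.length === deps.length) {
      let unchanged = true;
      for (let i = 0; i < deps.length; i++) {
        depComparisons++; // bookkeeping paid on every render
        if (deps[i] !== prev.deps[i]) {
          unchanged = false;
          break;
        }
      }
      if (unchanged) return prev.value;
    }
    const value = compute();
    previous = { deps, value };
    return value;
  };
}

const row: Row = { label: "Total" };
const memoLabel = createMemo<string>();
let lastDirect = "";
let lastMemoized = "";

for (let render = 0; render < 1000; render++) {
  // Direct version: one property read per render.
  lastDirect = row.label;
  // Memoized version: a closure, a deps array, and a comparison per render,
  // all to avoid that same single property read.
  lastMemoized = memoLabel(() => row.label, [row]);
}
```

Over 1000 simulated renders the memoized path performs 999 dependency comparisons and produces exactly the value the direct read gives for free.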

8:13

It's like installing a complicated

8:15

caching system to remember that 2 plus 2

8:17

is four. The overhead of the system now

8:19

takes longer than just doing the

8:21

original calculation. The engineer

8:23

applied a best practice and never

8:25

checked whether it was needed. And the

8:26

system allowed it because the

8:28

improvement looked good on paper. These

8:31

are not edge cases. They're the normal

8:33

failure mode of software at scale. Each

8:35

individual decision was defensible and

8:38

each engineer was competent. The

8:40

failures emerged from context gaps that

8:42

an individual could not bridge. Now I

8:44

want to introduce a frame that I think

8:46

is underappreciated in the AI and

8:48

architecture discourse. We humans have a

8:51

fundamental cognitive constraint.

8:53

Working memory. The research here is

8:55

very well established. We can hold four

8:57

to seven chunks of information in our

8:59

heads. This is not a training problem.

9:01

It's not something you can overcome with

9:03

experience. It's a structural

9:04

limitation. This matters enormously for

9:07

architecture because good architectural

9:09

reasoning requires holding multiple

9:12

concerns simultaneously. Performance

9:14

implications, security considerations,

9:16

maintainability, the existing patterns

9:18

in the codebase, the downstream effects

9:21

on other teams. Even a moderately

9:23

complex architectural decision might

9:24

involve a dozen relevant considerations.

9:27

We don't hold them in our heads well at

9:29

once. And so we tend to use abstractions

9:32

to cycle through and build mental models

9:34

and understand how to think. And we're

9:36

actually very good at that. And good

9:38

architects simplify and build

9:40

abstractions to understand complex

9:42

systems very very well. The problem is

9:46

that we are all relying on our own

9:48

mental hardware to do that. We're not

9:50

all equally good at it. And abstractions

9:53

only scale so far if you're doing them

9:55

in your head. So when a human reviews

9:57

code, they can either zoom in on the

9:59

local change or zoom out, but they have

10:02

trouble doing both with equal fidelity.

10:04

And this is why code review will often

10:06

catch bugs, but miss architectural

10:09

regressions. Now, zoom way out. Look at

10:12

what happens at the scale of a business.

10:14

Large engineering teams are essentially

10:16

distributed cognitive systems.

10:18

Individual engineers hold fragments of

10:20

the total system knowledge.

10:22

Communication overhead grows

10:24

quadratically with team size and context

10:26

transfer between engineers is extremely

10:29

lossy. So institutional knowledge decays

10:32

as people leave and just decays

10:34

inherently. The engineer who knew why

10:36

the weird caching pattern exists moved

10:39

on to another company a long time ago

10:42

and the documentation if it ever existed

10:45

is out of date. So this creates a very

10:47

predictable failure mode. Architectural

10:49

regressions that no single engineer

10:51

could have seen because seeing them

10:53

would have required synthesizing

10:55

information that was distributed across

10:57

the entire cognitive system. Research

10:59

from Factory.ai frames this as the

11:02

context window problem for human

11:04

organizations. A typical enterprise

11:07

monorepo will span thousands of files and

11:10

millions of lines of code. The context

11:12

required to make good decisions about

11:14

the architecture of that code also

11:17

includes the historical context of how

11:19

the code was built, the collaborative

11:21

context like what are the team

11:23

conventions and the environmental

11:25

context. What are the deployment

11:26

constraints? No human can hold all of

11:28

this in their head. We cope by building

11:31

mental models that are necessarily

11:33

incomplete but that we hope are useful

11:36

abstractions. Here is where we need to

11:38

think carefully about what AI systems

11:40

actually are. And rather than relying on

11:43

intuition about what machines can and

11:45

can't do, we should look seriously

11:47

at what modern large language models

11:50

when deployed with sufficient context

11:52

can do because they have a very

11:53

different cognitive architecture than

11:55

humans. They don't have the same working

11:57

memory constraints. They can hold a

11:59

200,000 token context window, maybe

12:02

150,000 words in a form of attention

12:05

that allows constant cross-referencing

12:07

across that entire input length. Some

12:09

models now support context windows of a

12:12

million tokens or more that are usable.

12:15

This isn't intelligence in the human

12:17

sense. It's something different.

12:19

Comprehensive pattern matching across a

12:21

very large context window with the

12:23

ability to apply consistent rules

12:25

without fatigue or forgetting. Now look

12:27

at what that means for the entropy

12:29

problem. The examples I described

12:32

earlier, the hook adding global

12:33

listeners, the cache that breaks

12:35

silently, those are all cases where a

12:37

human making a local change cannot see

12:40

the global implications. An AI system

12:42

with the entire codebase in context or

12:45

retrievable on demand doesn't have the

12:48

same constraint. It can check whether a

12:51

hook pattern is being instantiated

12:52

hundreds of times. It can trace the

12:54

referential equality implications of

12:56

cache usage. It can analyze asynchronous

12:59

flows across an entire function. It can

13:01

check whether the operation being

13:03

memoized is actually expensive. More

13:05

importantly, it can do this consistently

13:08

every time without deadline pressure,

13:10

without expertise variations, without

13:11

the knowledge walking out the door when

13:13

an engineer changes teams, without the

13:15

cognitive fatigue of reviewing your 47th

13:18

pull request of the week. Now, the

13:19

Vercel team has begun acting on this.

13:21

They are distilling over a decade

13:23

of React and Next.js optimization

13:26

knowledge into a structured repository.

13:29

40 plus rules across eight categories

13:32

ordered by impact from critical to

13:34

incremental. Critical would be

13:35

eliminating waterfalls. Incremental an

13:37

example would be an advanced technical

13:39

pattern. The repo is designed

13:41

specifically to be queryable by AI

13:43

agents. And when an agent reviews code,

13:45

it can reference those patterns. And

13:47

when it finds a violation, it can

13:48

explain the rationale and show the fix.
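As an illustration of what "queryable by AI agents" could look like, here is a hypothetical sketch of a rule store. It is not the actual repository's schema. The rule ids, category names, and the intermediate impact levels between critical and incremental are all assumptions.

```typescript
// Assumed impact scale; the talk names only "critical" and "incremental".
type Impact = "critical" | "high" | "medium" | "incremental";

interface Rule {
  id: string;
  category: string;
  impact: Impact;
  rationale: string;
  fix: string;
}

const IMPACT_ORDER: Impact[] = ["critical", "high", "medium", "incremental"];

// Hypothetical entries, loosely based on the examples in the talk.
const rules: Rule[] = [
  {
    id: "skip-memo-for-cheap-reads",
    category: "rendering",
    impact: "incremental",
    rationale: "Memo bookkeeping can cost more than a plain property read.",
    fix: "Read the property directly.",
  },
  {
    id: "parallelize-independent-awaits",
    category: "waterfalls",
    impact: "critical",
    rationale: "Sequential awaits on independent work add their latencies together.",
    fix: "Start independent work together and await it with Promise.all.",
  },
  {
    id: "dedupe-global-listeners",
    category: "events",
    impact: "medium",
    rationale: "One global listener per instance fires N callbacks per click.",
    fix: "Share a single global listener across instances.",
  },
];

// Returns rules ordered from highest to lowest impact, optionally by category.
function rulesByImpact(all: Rule[], category?: string): Rule[] {
  return all
    .filter((rule) => category === undefined || rule.category === category)
    .sort((a, b) => IMPACT_ORDER.indexOf(a.impact) - IMPACT_ORDER.indexOf(b.impact));
}

// What a reviewing agent might attach to a flagged line.
function explain(rule: Rule): string {
  return `[${rule.impact}] ${rule.id}: ${rule.rationale} Fix: ${rule.fix}`;
}

const orderedIds = rulesByImpact(rules).map((rule) => rule.id).join(",");
const topExplanation = explain(rulesByImpact(rules)[0]);
```

Keeping the rationale and the fix next to each rule is what would let a reviewer, human or AI, explain a violation rather than only flag it.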

13:51

The observation matches what I've seen

13:53

across other orgs. Most performance work

13:56

fails because it starts too low in the

13:59

stack. If a request waterfall adds over

14:02

half a second of waiting time, it

14:04

doesn't matter how optimized your calls

14:06

are. If you ship an extra 300 kilobytes

14:08

of JavaScript, shaving microseconds off

14:11

the loop doesn't matter. You're

14:12

essentially fighting uphill if you don't

14:15

understand how optimizations actually

14:17

work in a stack. The AI can enforce a

14:20

priority ordering that's consistent and

14:22

it will not get tired of reminding

14:24

people about how leverage works in

14:28

technical systems and how larger goals

14:30

like faster page load can actually be

14:32

accomplished inside a set of technical

14:36

rules for how we construct our systems.

14:38

So let me enumerate these categories

14:40

more precisely because I think the

14:41

specificity is helpful. First, AI has a

14:44

structural advantage when we can apply

14:46

consistent rules at scale. Humans are

14:49

not going to check 10,000 files against

14:51

a set of principles with the same

14:52

attention they'd give 10. That's not

14:55

true with AI. AI can apply identical

14:58

scrutiny to every file. And this matters

15:00

for ensuring consistent error handling

15:02

patterns, checking that all API

15:03

endpoints follow conventions, etc. AI

15:06

has a structural advantage around global

15:08

local reasoning, the cathedral and brick

15:10

problem, right? AI can reference

15:12

architectural documentation while

15:14

simultaneously examining line by line

15:16

changes, maintaining both levels of

15:19

abstraction simultaneously in a way our

15:21

brains don't do well. This is sort of

15:23

like peripheral vision. It's like seeing

15:24

the forest and the trees at once. And a

15:27

human reviewer doesn't do that. They

15:28

zoom in or zoom out. And AI can do both

15:30

in the same pass. Pattern detection

15:32

across time and space. AI systems with

15:35

access to version history and the full

15:37

codebase can identify patterns that span

15:39

the organization's entire experience.

15:41

Say this cache pattern has been misused

15:43

in this codebase three times before.

15:45

This type of waterfall was introduced

15:47

and later fixed. Humans cannot maintain

15:50

that degree of institutional memory. AI

15:52

can if the systems are built to surface

15:54

it. And that is a big question. It's a

15:56

question for humans. AI can teach at the

15:58

moment of need. This is perhaps the most

16:00

underappreciated advantage. When someone

16:02

writes a waterfall, a good

16:05

system doesn't just flag that as an

16:07

architectural defect. It can explain why

16:09

that is a problem and show you how to

16:12

parallelize it so that you don't cause

16:14

page load issues on checkout. When they

16:16

break a cache, it can explain the

16:18

referential equality issue in a way that

16:20

a junior engineer can understand. This

16:22

education can be embedded in workflow

16:25

rather than relying on pre-existing

16:27

knowledge that may or may not be

16:28

current. And it's certainly more than

16:30

would ever be covered in onboarding. AI

16:32

has tireless vigilance. Humans under

16:34

deadline pressure, we just skip stuff.

16:36

Humans will context switch between

16:37

features. Humans reviewing their umpteenth

16:40

PR are going to be less sharp. Humans

16:42

let things slide when they're tired and

16:44

frustrated. So this is the larger

16:46

insight that I think the industry is

16:48

just on the edge of internalizing. There

16:50

are specific categories of architectural

16:53

reasoning where AI is not just helpful,

16:56

it is structurally superior to human

16:58

cognition because the task requirements

17:00

exceed human cognitive constraints. It

17:03

is not because the AI is smarter. It is

17:06

because the task is pattern matching at

17:08

scale and humans aren't built for that.

17:10

Now, where does the AI still fall short?

17:12

This is where we still need nuance. The

17:14

same reasoning that reveals AI's

17:16

advantages is going to expose its

17:17

limitations. And these limitations are

17:19

not temporary gaps. They're structural

17:22

features of AI systems. Novel

17:24

architectural decisions. AI systems are

17:26

fundamentally tuned on existing code and

17:29

documentation. They excel at identifying

17:32

when code deviates from established

17:33

patterns. They are not good at inventing

17:35

new patterns. And you see that when

17:37

cutting edge engineers like Andrej

17:39

Karpathy talk about not being able to

17:42

use AI to code net new things. AI

17:45

assistance is often limited to reasoning

17:48

from possibly relevant prior examples.

17:51

And if there are no prior examples, it's

17:54

going to be hard. Business context and

17:56

trade-offs. Architecture is not just

17:58

about what's technically optimal. It's

17:59

about trade-offs between competing

18:01

concerns around development velocity,

18:03

maintainability, consistency, and

18:04

flexibility. These trade-offs are

18:06

contextual and tied to organizational

18:10

constraints, tied to market pressure. An

18:12

AI can tell you that a pattern creates

18:14

technical debt, but it can't tell you

18:16

whether that's the right call or not.

18:17

Cross-system integration. Modern

18:19

architectures involve multiple systems,

18:21

often owned by different teams, and the

18:23

integration points often are not fully

18:26

documented in any single source the AI

18:28

can access. The engineers who know that

18:30

this service is maintained by X team

18:33

that ships on a different cadence are

18:35

going to have organizational context

18:36

that no code analysis can provide. The

18:39

person who remembers we tried this

18:41

integration before and it caused issues

18:43

during Black Friday has historical

18:45

context that's probably not accessible

18:47

to the AI. Judgment about good enough

18:49

architecture involves knowing when to

18:51

stop optimizing and technically superior

18:53

solutions that take 6 months aren't

18:55

necessarily better than adequate

18:57

solutions that ship now. The perfectly

18:59

clean architecture doesn't help if it

19:01

just exists on paper. This kind of

19:03

judgment requires understanding stakes

19:05

and risks and humans remain very very

19:07

good at it. The why behind existing

19:09

decisions. Code bases are archaeological

19:12

artifacts. They contain decisions made

19:14

under constraints that no longer exist.

19:16

An AI can see what the code does. It

19:19

often cannot infer why the decision was

19:21

made that way and can't distinguish

19:23

between load-bearing decisions and

19:25

historical accidents. Humans can. So

19:28

what does all of this mean for how we

19:29

should actually think about deploying AI

19:32

assisted development systems? First,

19:34

please recognize that the value

19:36

proposition is specific. I have taken

19:38

time to go into the specifics of where

19:40

AI excels in architectural problems and

19:43

where it doesn't because I think that we

19:45

have to be specific if we want to

19:47

position AI as a useful tool in these

19:50

conversations. If you want to position

19:52

AI as a general purpose oracle, you're

19:54

not going to get very far. Second, the

19:56

patterns have to exist before AI can

19:58

enforce them. And I note that Vercel is

20:00

taking the time to distill years of

20:03

performance optimization experience into

20:04

structured rules. They're not just

20:06

depending on the AI to derive those

20:08

rules from the codebase because they

20:09

know they're not consistently applied.

20:11

It takes preparatory work and commitment

20:14

to live out these principles with AI.

20:16

Third, the context problem is a hard

20:18

challenge. Even with a million token

20:20

context windows, enterprise code bases

20:23

can be 10 or 100 times larger than that.

20:26

And so the scaffolding required to

20:28

surface the right context for a given

20:29

decision is non-trivial. It requires

20:32

semantic search, progressive disclosure,

20:34

possibly RAG, possibly not, possibly

20:36

structured repository overviews. This is

20:39

where much of the engineering effort to

20:40

get a system like this ready would go.
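One small piece of that scaffolding can be sketched as follows: choosing which snippets fit into a fixed token budget. This is a deliberately naive greedy selection, not how any particular product works. The file paths, token counts, and relevance scores are invented, and the scores are assumed to come from some earlier retrieval step such as semantic search.

```typescript
interface Snippet {
  path: string;
  tokens: number; // estimated size of the snippet in tokens
  relevance: number; // assumed score from a prior retrieval step, higher is better
}

// Greedy selection: take the most relevant snippets that still fit the budget.
function selectContext(candidates: Snippet[], budget: number): Snippet[] {
  const ranked = candidates.slice().sort((a, b) => b.relevance - a.relevance);
  const chosen: Snippet[] = [];
  let used = 0;
  for (const snippet of ranked) {
    if (used + snippet.tokens <= budget) {
      chosen.push(snippet);
      used += snippet.tokens;
    }
  }
  return chosen;
}

// Hypothetical candidates for a change to the checkout flow.
const candidates: Snippet[] = [
  { path: "cache.ts", tokens: 40000, relevance: 0.6 },
  { path: "checkout.ts", tokens: 60000, relevance: 0.9 },
  { path: "legacy-report.ts", tokens: 150000, relevance: 0.7 },
  { path: "coupon.ts", tokens: 30000, relevance: 0.8 },
];

const selectedPaths = selectContext(candidates, 200000)
  .map((snippet) => snippet.path)
  .join(",");
// selectedPaths is "checkout.ts,coupon.ts,cache.ts"; the 150k-token file is skipped
```

Even in this toy version a large but moderately relevant file is left out entirely. That is the trade-off that progressive disclosure and structured repository overviews are meant to soften.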

20:42

Model intelligence is increasingly

20:44

commoditized. Context engineering is the

20:47

differentiator. And companies like

20:49

Factory.ai and Augment are building

20:51

entire products around the idea that you

20:53

need to surface the right context at the

20:55

right time in order to take full

20:57

advantage of model capability. I would

20:59

call out again that human judgment

21:01

remains irreplaceable even in those

21:03

systems. So novel decisions, business

21:06

context, cross-system integration. AI

21:08

can handle pattern matching. AI can

21:10

handle consistency enforcement if we set

21:12

it up to do so. Humans are still going

21:15

to need to be involved to do what we're

21:16

good at, to make the judgment-laden

21:18

decisions we need to. Our goal is simply

21:20

to put AI in the parts of the

21:22

architecture where humans were going to

21:24

lose to entropy anyway. Fifth and

21:26

finally, the organizational implications

21:27

of all this are really interesting. If

21:29

AI can enforce architectural patterns

21:32

consistently, whose patterns are they?

21:34

How do you govern the rule sets? How do

21:36

you evolve them over time? How do you

21:38

handle disagreements between teams with

21:40

different architectural standards? Those

21:42

have often ridden under the surface.

21:44

Those have been implicit disagreements.

21:46

This conversation, talking about how

21:49

AI can help us with enforcing

21:51

architectural principles at scale to

21:53

reduce entropy in our systems, is going to

21:56

force teams that traditionally didn't

21:57

have to fight because their principles

22:00

could just stay separate to have larger

22:02

conversations and I think that's going

22:03

to be a new area of organizational human

22:07

alignment that we have to sort through.

22:09

The pattern I keep seeing in

22:11

organizations is that we keep asking the

22:13

wrong question. We keep asking where can

22:15

AI autonomously and independently

22:18

drive on development. Maybe AI should be

22:20

shunted out of architecture altogether,

22:22

etc. I think we should ask more specific

22:25

questions about AI and technical

22:26

development. I think a good example of

22:28

that is what aspects of technical

22:30

architecture can we put AI against

22:33

because we notice as humans that we have

22:36

consistent weaknesses in these spaces.

22:38

That takes a lot of nuance, right? It

22:40

takes a lot of thoughtfulness to

22:41

understand AI is structurally superior

22:44

at maintaining context at scale and

22:46

humans are structurally superior at

22:48

judgment under uncertainty. And then you

22:50

have to think about where to apply that

22:52

in these systems. This is not a story

22:54

about replacement. This is a story about

22:56

complementarity and about getting that

22:59

complementarity right at scale and how

23:01

that requires understanding our actual

23:03

cognitive strengths as a species and

23:05

understanding our actual limitations and

23:07

designing AI systems that strengthen us

23:11

by addressing our weaknesses. And I'm

23:13

exposing that. I'm telling that story

23:15

here today because I believe that this

23:18

kind of conversation, this quality of

23:20

thinking is what we need not just for

23:22

engineers but for multiple different

23:25

departments in 2026. We need to be

23:27

thinking at this level in product, in

23:30

marketing, in CS, where do we have

23:32

cognitive blind spots? Where can AI

23:34

patch those? Where do humans still play

23:36

a role? That is the question of 2026.

23:39

And I think digging in a little bit into

23:41

an area where we have made some lazy

23:43

assumptions about architecture and how

23:45

AI works shows how rich the conversation

23:48

can be. The future of software

23:50

architecture is not human versus AI. It

23:53

is AI helping us with things like

23:56

entropy that humans were always going to

23:57

lose at while humans focus on some of

24:00

the creative and contextual work that AI

24:02

just can't touch. Understanding that

24:05

distinction and really deeply

24:07

implementing it helps organizations to

24:10

actually thrive in the age of AI instead

24:13

of to make lazy assumptions and just

24:16

struggle. There is no substitute for

24:18

turning on our brains and thinking

24:20

through issues at this level. And it is

24:22

not just engineers. I know I dove

24:24

into the technical deep end here, but

24:26

everybody's going to have to think at

24:27

this level about how their systems work

24:30

in order to build effective partnerships

24:32

between AI and humans in 2026.
